Plan before implementing

Speed without direction is expensive iteration. Here's how planning with AI changes the dynamic.

AI agents are great at building the wrong thing fast.

I learned this the hard way. Early on, I'd type "build feature X" and watch the agent work. Lines of code appeared. Files got created. Everything looked right. Then I'd look at what it actually built and realize: this solves a problem I didn't have.

The code was fine. The approach was wrong.

The assumption problem

When you tell an AI agent to "build a user authentication system," it makes dozens of decisions without asking. Use a third-party service or roll your own? Session cookies or JWTs? Store tokens in cookies or localStorage? Support OAuth providers? Each choice has tradeoffs the agent can't know without asking.

Each assumption compounds. By the time you see the output, you're looking at a complete system built on foundations you never approved. The agent didn't make bad decisions. It made decisions you didn't make.

This is the trap. AI moves fast. You see progress and feel productive. But speed without direction is just expensive iteration. Every hour spent fixing wrong assumptions is an hour you could have spent building the right thing.
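To make the hidden decisions concrete, here is a minimal Python sketch that writes the authentication choices from above down as explicit open questions. The `Decision` class and `unresolved` helper are hypothetical names invented for illustration, not part of any tool; the point is only that anything left as `None` is something the agent will decide for you.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """One choice hidden inside a vague prompt (hypothetical structure)."""
    question: str
    options: tuple[str, ...]
    chosen: Optional[str] = None  # None means nobody has decided yet


def unresolved(decisions: list[Decision]) -> list[str]:
    """Return the questions that still have no explicit answer."""
    return [d.question for d in decisions if d.chosen is None]


# The four choices named above for "build a user authentication system".
# Only one of them has been decided by a human in this example.
auth_decisions = [
    Decision("Who handles auth?", ("third-party service", "roll your own")),
    Decision("Session mechanism?", ("session cookies", "JWTs"), chosen="session cookies"),
    Decision("Where are tokens stored?", ("cookies", "localStorage")),
    Decision("Support OAuth providers?", ("yes", "no")),
]

open_questions = unresolved(auth_decisions)
print(f"{len(open_questions)} decisions the agent would make for you:")
for question in open_questions:
    print(" -", question)
```

Even this short list leaves three questions open. A real feature usually hides many more.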

Planning changes the dynamic

The fix isn't to slow down the AI. It's to front-load the thinking.

Before the agent writes a single line of code, I now ask for a plan. What should this feature do? What approach will it take? What edge cases matter? What files will it touch?

The plan becomes the specification. Not a vague idea in my head, but a concrete document I can read, edit, and approve before any implementation begins.

This isn't new. Developers have always planned before implementing. But AI changes how planning works. The agent can help identify gaps I missed. It can research the codebase and suggest approaches I hadn't considered. Planning becomes collaborative. And it gets better when the agent already understands your patterns through well-structured rules and has access to current documentation.

How this works in practice

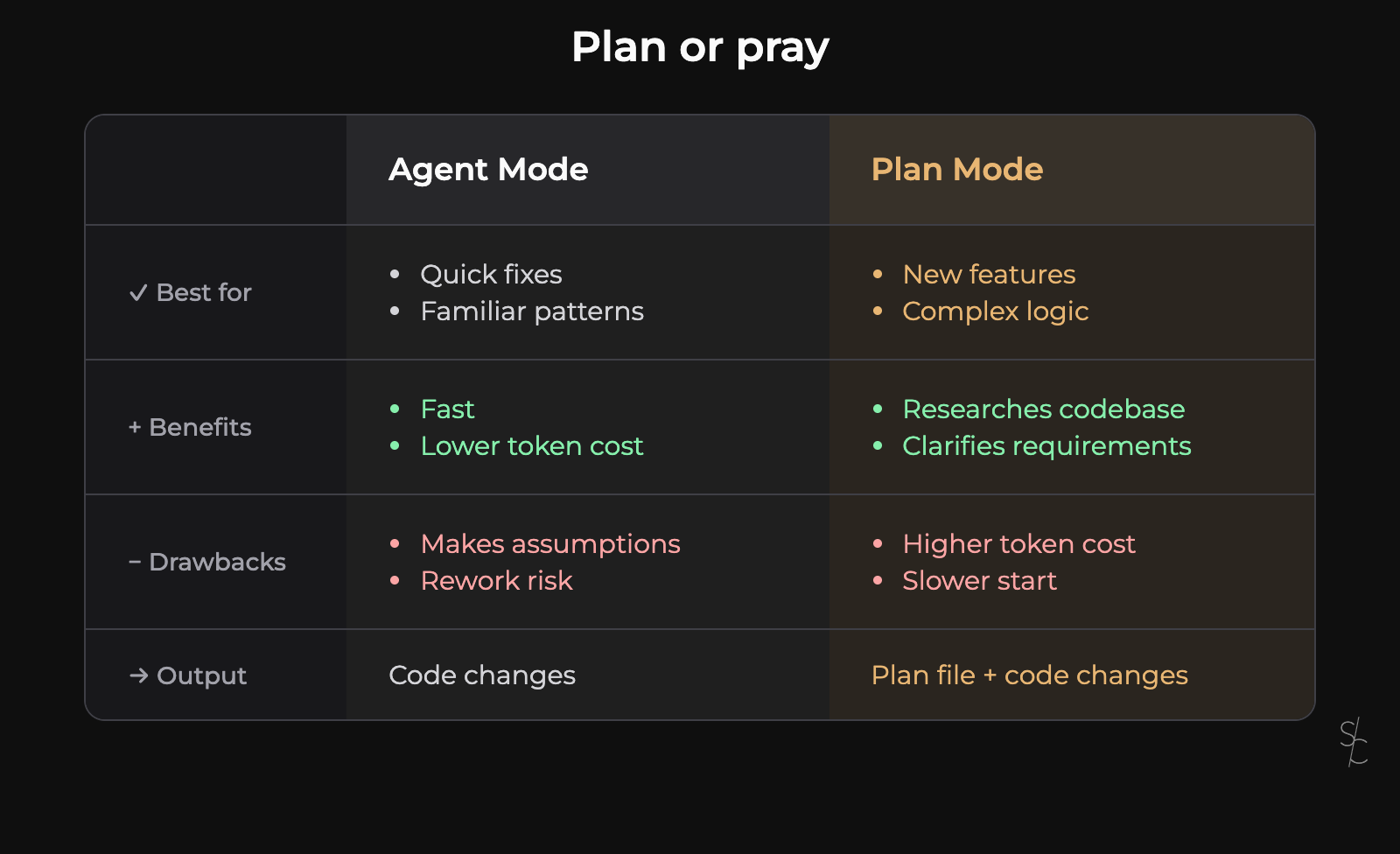

Cursor has a dedicated Plan Mode that makes this concrete. Press Shift+Tab to switch from Agent Mode to Plan Mode. Instead of jumping straight to implementation, the agent does something different.

First, it asks clarifying questions. The kind a colleague would ask during a plan review. What exactly should this feature do? Are there constraints I should know about? How should it handle edge cases? This alone catches misunderstandings before they become code.

Then it researches your codebase. It finds relevant files, existing patterns, related implementations. Context that would take you minutes to gather, assembled in seconds.

Finally, it produces a plan. A markdown file listing what it will build, how it will approach each part, and what order it will work in. You can read this plan. Edit it. Cut unnecessary steps. Add context the agent missed. Refine the approach until it matches what you actually want.

Only then do you click build.
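As an illustration of what such a plan contains, the sketch below models one as plain data and renders it to markdown. It is not Cursor's actual plan format, which this post doesn't specify. The `Plan` class, the section names, the example feature, and the file paths are all assumptions made up for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Plan:
    """A hypothetical plan: what to build, in what order, touching which files."""
    goal: str
    steps: list[str]
    files: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)


def render_markdown(plan: Plan) -> str:
    """Render the plan as a small markdown document a human can review."""
    lines = [f"# Plan: {plan.goal}", "", "## Steps"]
    lines += [f"{i}. {step}" for i, step in enumerate(plan.steps, start=1)]
    if plan.files:
        lines += ["", "## Files to touch"] + [f"- {path}" for path in plan.files]
    if plan.edge_cases:
        lines += ["", "## Edge cases"] + [f"- {case}" for case in plan.edge_cases]
    return "\n".join(lines)


# Example content is invented for illustration only.
plan = Plan(
    goal="Password reset by email",
    steps=[
        "Add a reset-token table",
        "Send the reset email",
        "Add an admin dashboard for reset stats",
        "Build the reset form",
    ],
    files=["auth/reset.py", "templates/reset_email.html"],
    edge_cases=["Expired token", "Unknown email address"],
)

# Reviewing the plan: cut a step that isn't needed before anything is built.
plan.steps.remove("Add an admin dashboard for reset stats")

rendered = render_markdown(plan)
print(rendered)
```

The edit step is the part that matters. Removing one line from a plan is cheaper than removing the code that line would have produced.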

When things go wrong

Even with a good plan, sometimes the implementation doesn't match expectations. The agent interprets something differently than you intended, or an edge case surfaces that nobody anticipated.

Here's where planning pays off again. Instead of trying to fix broken code through follow-up prompts, you go back to the plan. Revert the changes. Refine the plan to be more specific about what you need. Run it again.

This sounds slower, but it's usually faster. Patching broken code creates layers of fixes on top of wrong foundations. Refining the plan and rebuilding produces cleaner results. The plan is your checkpoint.

I save plans to the workspace (under .cursor/plans). They become documentation. When I return to a feature later, I can see what was considered, what was decided, and why. Team members get the same context. Interrupted work is easy to resume.

Knowing when to skip

Not every task needs a plan.

Quick fixes, familiar patterns, small changes in well-understood code. These can go straight to Agent Mode. If you know exactly what you want and the change takes only a few minutes, planning adds overhead without value.

But for anything new or complex, planning pays off. A few minutes upfront saves hours of rework. New features, complex logic, architectural decisions, anything with multiple valid approaches. These deserve a plan.

The deciding factor is cost. When a wrong assumption means hours of rework, you plan first.
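The cost comparison can be written as a back-of-the-envelope check. This is a rough sketch, not a rule I actually compute: the function name `should_plan`, the idea of estimating a probability for a wrong assumption, and the numbers in the examples are all assumptions for illustration.

```python
def should_plan(planning_minutes: float, rework_minutes: float,
                p_wrong_assumption: float) -> bool:
    """Plan first when the expected rework outweighs the time spent planning.

    All three inputs are gut-feel estimates, not measurements.
    """
    if not 0.0 <= p_wrong_assumption <= 1.0:
        raise ValueError("p_wrong_assumption must be between 0 and 1")
    expected_rework = p_wrong_assumption * rework_minutes
    return expected_rework > planning_minutes


# Quick fix in familiar code: little to get wrong, cheap to redo.
quick_fix = should_plan(planning_minutes=5, rework_minutes=10, p_wrong_assumption=0.1)

# New feature with several valid approaches: a wrong guess costs hours.
new_feature = should_plan(planning_minutes=5, rework_minutes=180, p_wrong_assumption=0.3)

print("Plan the quick fix?", quick_fix)
print("Plan the new feature?", new_feature)
```

With these made-up estimates the quick fix goes straight to Agent Mode and the new feature gets a plan. The exact numbers matter less than asking the question before you start.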

The real skill

AI changed what it means to write software. I spend less time typing code and more time thinking about what to build. The syntax matters less. The architecture matters more.

Planning is where that thinking happens. Not in some abstract document nobody reads, but in a concrete artifact that directly shapes what gets built. The plan is the lever. Get it right, and the AI amplifies your intent. Get it wrong, and the AI amplifies your confusion.

The skill isn't prompting. It's thinking clearly about what you want before you ask for it.

AI accelerates execution. You still own the direction.

More from the blog

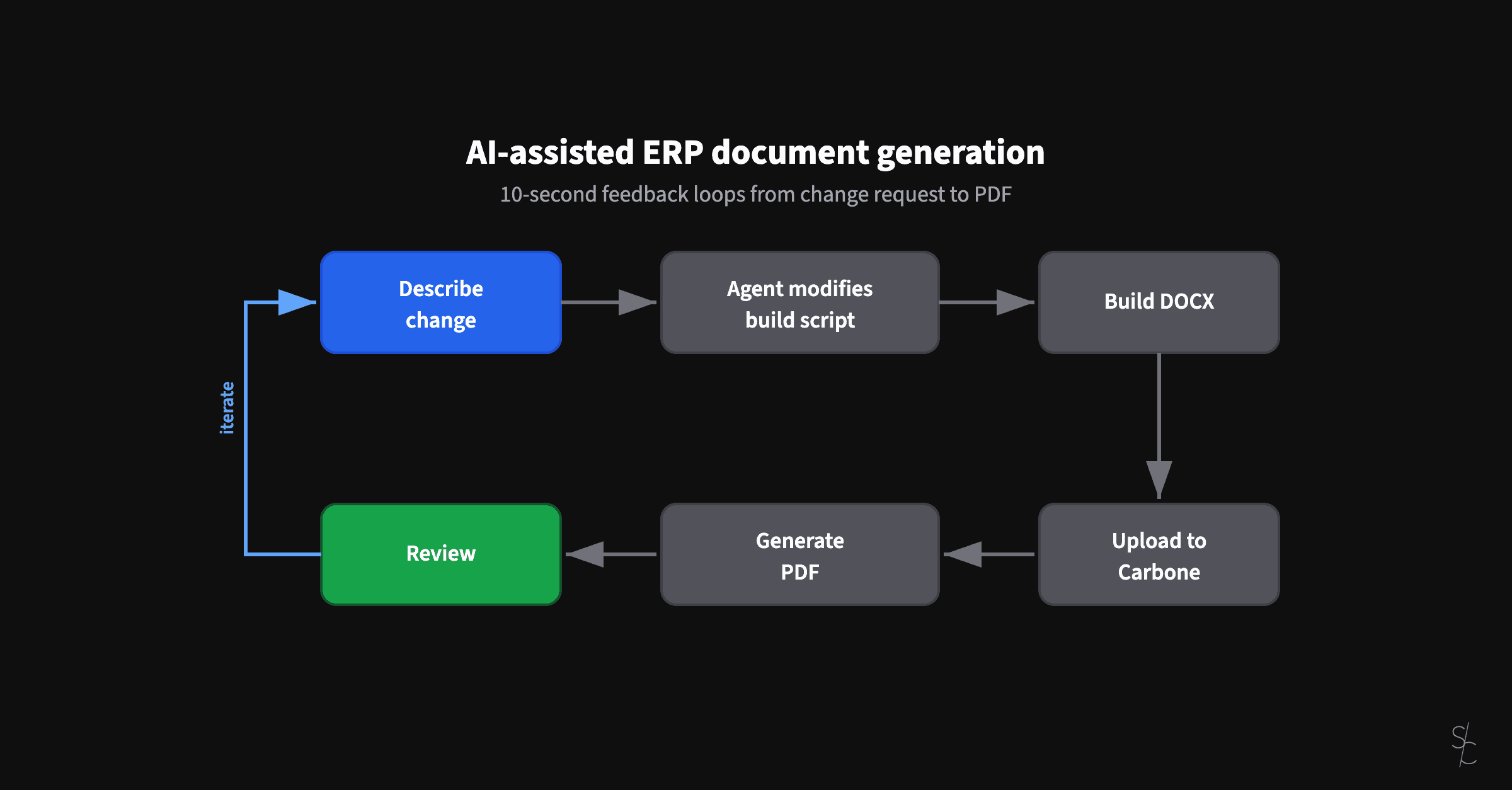

AI-assisted ERP document generation

ERP documents look simple. Underneath, they're conditional logic puzzles that have resisted modernization for decades.

Subagents replaced my /code-review command

Rules tell agents what to do. Subagents verify they actually did it.

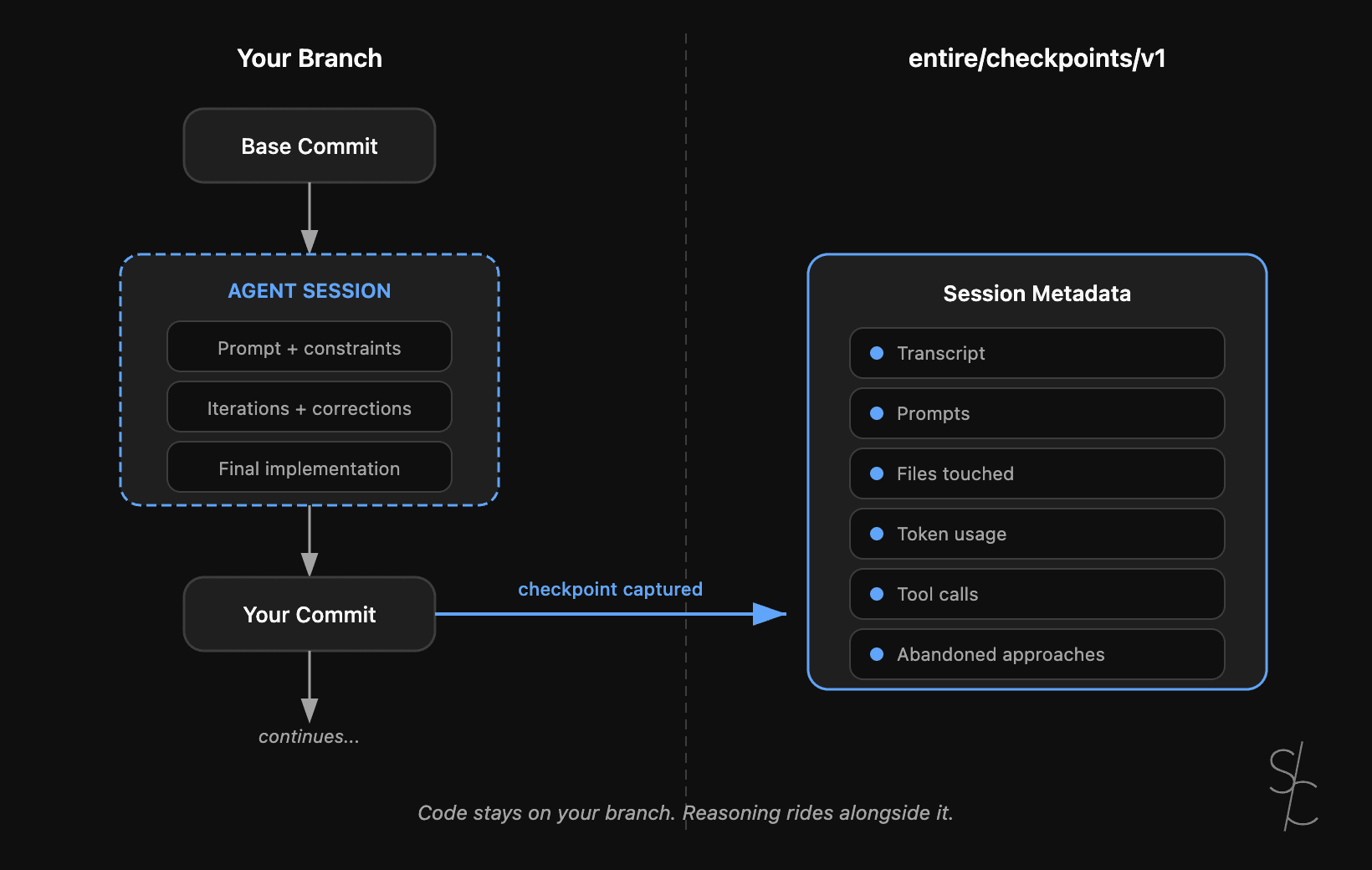

Agent context is the new technical debt

Git tracks what changed. With AI-generated code, the reasoning behind it matters more. Entire makes that context durable.